The war in Iran has been unfolding at breakneck pace ever since the US and Israel launched a series of surprise attacks across the country on February 28. An eye-watering 1,000 targets were hit in the first 24 hours of operation ‘Epic Fury’ alone, and within days, Supreme Leader Ali Khamenei and numerous other high-level Iranian officials were assassinated in targeted strikes.

In a video posted to X on March 11, Admiral Brad Cooper, head of US Central Command (CENTCOM), said that American forces had at the time hit more than 5,500 targets inside Iran. Cooper credited the success of at least part of those operations to advanced AI tools. “Humans will always make final decisions on what to shoot and what not to shoot and when to shoot. But advanced AI tools can turn processes that used to take hours and sometimes even days into seconds,” he said. The statement offered rare insight into how AI is used in modern warfare.


© France 24


After announcing a $200 million defence contract in July 2025, AI company Anthropic quickly became embedded in the military's workflow, and its AI model, called ‘Claude’, was the first approved to operate on classified military networks.

Then came a public squabble.

Days before the attacks on Iran, Anthropic’s leadership refused the Pentagon’s demand for “unrestricted” access to Claude. Its co-founder Dario Amodei released a public statement saying that Anthropic could not ‘in good conscience’ accede to the requests of the Pentagon, and adding that “some uses are also simply outside the bounds of what today’s technology can safely and reliably do.”

Anthropic implied that the US Department of War was attempting to overturn two conditions: that its AI models not be used for mass domestic surveillance or for fully autonomous weapons. Just hours after the statement was released, another AI company – Sam Altman’s OpenAI – swooped in and took Anthropic’s place at the Department of War.

US Secretary of War Pete Hegseth in turn banned Anthropic and called its decision to turn down the Pentagon’s defense contract a “master class in arrogance and betrayal”, adding that his department would designate Anthropic a Supply-Chain Risk to National Security. Anthropic sued the Department of War and other federal agencies in response. Recent reports claim that Anthropic’s AI tools remain in use despite the blacklisting, and that Pentagon staffers are reluctant to use other models.

Defense Secretary Pete Hegseth stands outside the Pentagon during a welcome ceremony for the Japanese defense minister at the Pentagon in Washington, Jan. 15, 2026 © Kevin Wolf, AP


The feud brought to light a side of AI that could have more worrying consequences than slop-associated brainrot. Its role in defence is accelerating at a disquieting pace, and the US is not the only government with which it is enmeshed. Israel, China, Russia, France and the UK are among the growing list of nations incorporating large language models (LLMs) – modern AIs pre-trained on vast amounts of data – into their defence systems.

Information about how, where and why AI is used is slowly beginning to trickle out.

A lack of accuracy and oversight

The US military has reportedly been using the Maven Smart System built by Palantir and Anthropic’s technology during its operations in Iran. Maven was also used by the Pentagon to capture Venezuelan President Nicolas Maduro, according to reports by the Wall Street Journal.

The precise details of its use are unlikely to be made public. Dr. Heidy Khlaaf, Chief AI Scientist at the AI Now Institute, says its primary role is to streamline what’s known as a ‘kill chain’ – a military concept that describes the sequence of steps in an attack.

“That would include surveillance, gathering intelligence, selection and then ultimately striking a target,” says Khlaaf. She says that AI could significantly reduce the time needed for each step, and the personnel required to do it. “For example, Maven, Anthropic’s and Palantir’s system, claims that their technology allowed one unit of just 20 people to do the work of 2,000 staff. Speed is what’s being sold here.”

Most of these AI tools collate, analyse and synthesise data in what are known as ‘decision support systems’. Khlaaf says that, theoretically, decision support systems just make military recommendations and require oversight. However, she adds, that oversight may not be very effective.

Dario Amodei, CEO and co-founder of Anthropic, attends the annual meeting of the World Economic Forum in Davos, Switzerland, Jan. 23, 2025. © Markus Schreiber, AP


“People have what we call an automation bias, which is a tendency for humans to favor suggestions made by automated systems like AI. So that oversight is really superficial in practice, especially in the military space where the automation bias is the worst. Human beings just become rubber stamps at that point. It veers into autonomous systems technologies,” Khlaaf argues.

Autonomous weapon systems have the power to select targets and perform their function without any oversight from a human being. It’s unclear if the Pentagon’s push for unrestricted use of AI signals the use of these systems, which have the power to launch strikes on their own.

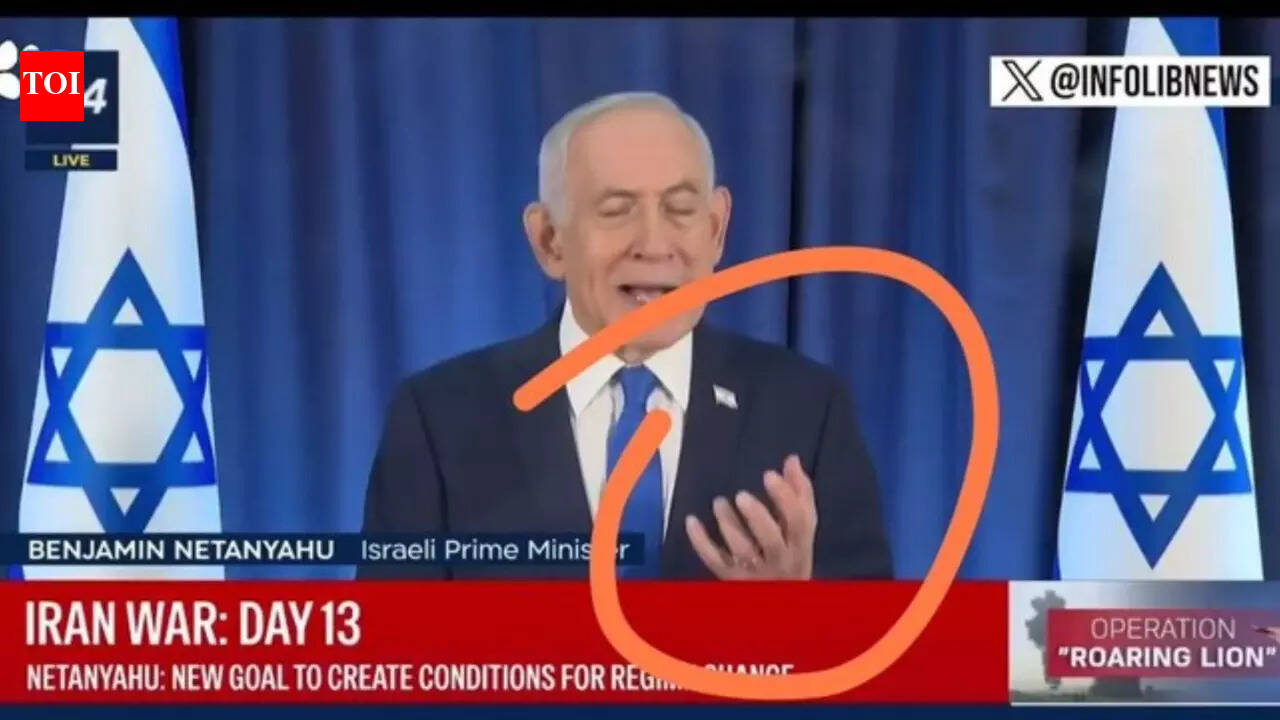

The US isn’t the only government using AI to streamline the kill chain. Before Israeli jets fired the ballistic missiles that killed Iran’s Supreme Leader Ali Khamenei, Israel’s intelligence services had long used AI to monitor Tehran’s hacked traffic cameras and intercept communications. The main platform used by the IDF today is an AI system called Habsora (“The Gospel”), which has allegedly been used to generate large numbers of target recommendations – often residential homes linked to suspected Hamas members in Gaza.


Former intelligence officers describe it as enabling a “mass assassination factory,” with strikes reportedly killing entire families, including in homes with no confirmed militant presence.

This is even more disturbing when the inaccuracy rates of AI are taken into account. Khlaaf says generative AI and large language models (LLMs) often have accuracy rates as low as 50% or even lower. For targeting systems, such as those investigated in Israel's Gaza operations like Gospel or Lavender, accuracy is as low as 25-30% in some cases.

The accuracy rates are particularly low for large language models like the ones militaries are using now. “We have been using AI since the 1960s, but those were built by the military itself and worked with limited data sets that focus on specific tasks, so they were a lot more accurate,” Khlaaf says.

Khlaaf says the sheer scale of new AI models makes things inherently opaque: “One model will have to analyse billions of data points. How would you know where the errors come from?”

Safety and security risks

Most AI models used in the military are ‘black boxes’ – their internal workings are a mystery to their users. Khlaaf cites a recent investigation that quoted the US military as saying it had “no way of knowing” whether it used artificial intelligence in conducting a specific airstrike in Iraq in February 2024 that killed 20-year-old student Abdul-Rahman al-Rawi.

“This is a huge problem because it completely obscures accountability. There’s no way of knowing if attacks are deliberate, if they are intelligence failures or if the AI is inaccurate. The black box nature of AI makes it particularly opaque.”

That opacity won’t just hurt the people being targeted – according to Khlaaf, it could also end up putting the national security of the countries using AI at risk.


“Large language models are seriously compromised: they have huge amounts of vulnerabilities because they are trained on the open internet. There is no control of the supply chain. False claims can come from Reddit, or someone's blog posts – there isn’t much discretion in picking what goes in.”

“So it’s easy to create backdoors and very hard to find out. We have seen targeted operations from Russia and China, where huge amounts of propaganda have been put out into the world to try and change the outcomes of large language models. Anthropic has even said that you need just 250 data points to change the behaviour of an AI model. We could be compromised right now, and we wouldn’t even know.”

People clean debris from their apartment in Tehran, Iran, Sunday, March 15, 2026 © Vahid Salemi, AP


Perhaps one of the most worrying aspects of AI use in the military comes from a recent study led by Professor Kenneth Payne from the Department of Defence Studies at King's College London. Three leading AI models, versions of GPT, Claude and Gemini, were placed in a tournament of 21 simulated nuclear crisis scenarios. The nuclear taboo, according to the paper, was weaker than expected. “Nuclear escalation was near-universal: 95% of games saw tactical nuclear use and 76% reached strategic nuclear threats,” the paper said. “Claude and Gemini especially treated nuclear weapons as legitimate strategic options, not moral thresholds, typically discussing nuclear use in purely instrumental terms.”

“Those results should give us pause,” Khlaaf says, citing the King’s College study as one sobering example. “There’s a lot of research that shows AI compromises restraint and increases the chances of escalation. This should be nowhere near human lives.”

AI is not a weapon of war like nuclear arms or missiles, because its efficacy, accuracy and full range of uses remain unknown.

“There’s a narrative that this is like an AI arms race, that the military that conquers AI will win. But we have no idea if that’s true, because we lack the evidence to show that the tech will actually serve a purpose we want,” says Khlaaf. “The US is seen as setting a precedent for AI, but it should really be a cautionary tale.”
